Even with the pandemic raging, natural disasters are having a busy 2020: tornadoes ravaged Nashville a few months ago; the chances of a new “big one” have dramatically risen in California’s fault zones; and meteorologists are anticipating a stronger-than-usual hurricane season for the U.S. More than ever, understanding and anticipating these events is crucial – and now, two teams of researchers have announced that they have used supercomputers to run the highest-resolution-ever simulations of tornadoes and earthquakes.

While researchers have understood the basics of tornado formation for some time, the particulars are difficult to work out – so difficult, in fact, that the National Weather Service has a 70 percent false alarm rate for tornado warnings. Leigh Orf, an atmospheric scientist with the University of Wisconsin-Madison’s Space Science and Engineering Center, is on a quest to change that using the most detailed tornado simulations ever produced.

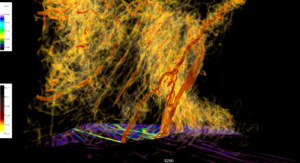

Using a piece of software he developed, Orf has been simulating and visualizing fully resolved tornadoes and their parent supercells for a decade. To run these powerful simulations, Orf has used a variety of supercomputers – most recently, Frontera at the Texas Advanced Computing Center (TACC). Frontera delivers 23.5 Linpack petaflops of computing power, placing it 8th on the most recent Top500 list of the world’s most powerful publicly ranked supercomputers. With Frontera, Orf has been able to run simulations at high spatial and temporal resolutions – ten meters and a fifth of a second, respectively.

“It is only with this level of granularity that some features become evident,” Orf said in an interview with TACC’s Aaron Dubrow. “We need to throw a lot of computational power to get it right and resolve salient features. Ultimately, the goal is prediction, but the truth is, we still don’t understand some basic things about how supercell thunderstorms really work. … It’s really hard to answer questions like, ‘will this supercell that just formed produce a tornado, and if so, will it be especially violent?’”

Orf’s research has, to date, produced a variety of insights into the tornadogenesis process. When studying a deadly tornado event in Oklahoma, for instance, Orf found several characteristic features that might help explain how the tornadoes formed. “In these simulations, there’s a lot of spinning going on that you wouldn’t see with the naked eye,” he said. “That spinning is sometimes in the form of vortex sheets rolling up, or misocyclones, what you might call mini tornadoes, that aren’t quite tornado strength that spin along different boundaries in the storm.” Similarly, his simulations revealed that certain types of currents serve as driving forces for tornado intensity.

Now, with his allocation on Frontera, Orf is looking to re-simulate storms in a variety of conditions to see how minor variable changes might impact the formation or intensity of tornadoes. “Very small changes early on in the simulation can lead to very big changes in the simulation down the road,” he said. “This is an intrinsic predictability issue in our field. We’re doing some of the frontier work to try to tease out these variables.”

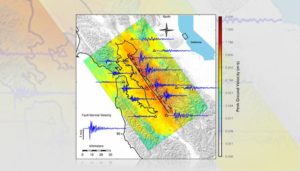

While Orf is looking to the sky, a team at Lawrence Livermore National Laboratory (LLNL) is looking to the ground. Using code developed at LLNL, the researchers simulated a magnitude 7.0 earthquake on the Hayward Fault, which runs through the San Francisco Bay Area. The new simulations ran at double the resolution of previous iterations, capturing seismic waves as short as 50 meters across the entire fault zone. These simulations, too, required extraordinary computing power: in this case, LLNL’s Sierra system, which delivers 94.6 Linpack petaflops, placing it third on the most recent Top500 list. The Sierra-based simulations were run during Sierra’s open science period in 2018, before it switched to classified work. The team also made use of LLNL’s Lassen system (an unclassified machine with similar architecture to Sierra), which delivers 18.2 Linpack petaflops and placed 14th.

“The [Institutional Center of Excellence] prepared computer codes at LLNL to run efficiently on Sierra and Lassen prior to their arrival so they could immediately take advantage of those capabilities when they came online, and this earthquake simulation and other science-based projects are achieving exactly what they were meant to do,” said Chris Clouse, associate program director for computational physics at LLNL, in an interview with LLNL’s Anne Stark.

“We used a recently developed empirical model to correct ground motions for the effects of soft soils not included in the Sierra calculations,” said Arthur Rodgers, a seismologist at LLNL. “These improved the realism of the simulated shaking intensities and bring the results in closer agreement with expected values.”

Now, with hurricane season beginning, eyes are turning to the wide range of weather and climate supercomputer centers – many of which have recently received large installations or investments – to see if the 2020 hurricane season can be more accurately anticipated.